Xiaoli Wang1, Sipu Ruan1, Xin Meng1, Hongtao Wu1, Wanze Li1, Zhanhong Sun1, Yuwei Wu1, Ceng Zhang1, Wan Su1, Chen Dong2, Cecilia Laschi1,2*, Gregory Chirikjian1,2*

1Department of Mechanical Engineering, National University of Singapore, Singapore

2Department of Mechanical Engineering, University of Delaware, USA

*Principal Investigator

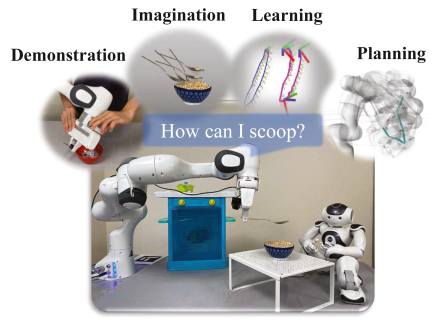

Motivation: Learning Affordances Through Play

Just like this monkey experimenting with stones, humans learn to use tools by playing, testing, and discovering their affordances. A stick can become a lever, a rock can become a hammer — but this is not taught directly. Instead, it emerges through interaction and imagination.

This project takes inspiration from this natural process. Our goal is to enable robots to:

- Imagine possible uses of unfamiliar objects, much like humans and animals.
- Simulate physical interactions in a virtual environment before acting in the real world.
- Discover affordances (the actionable possibilities an object provides) without needing massive labeled datasets.

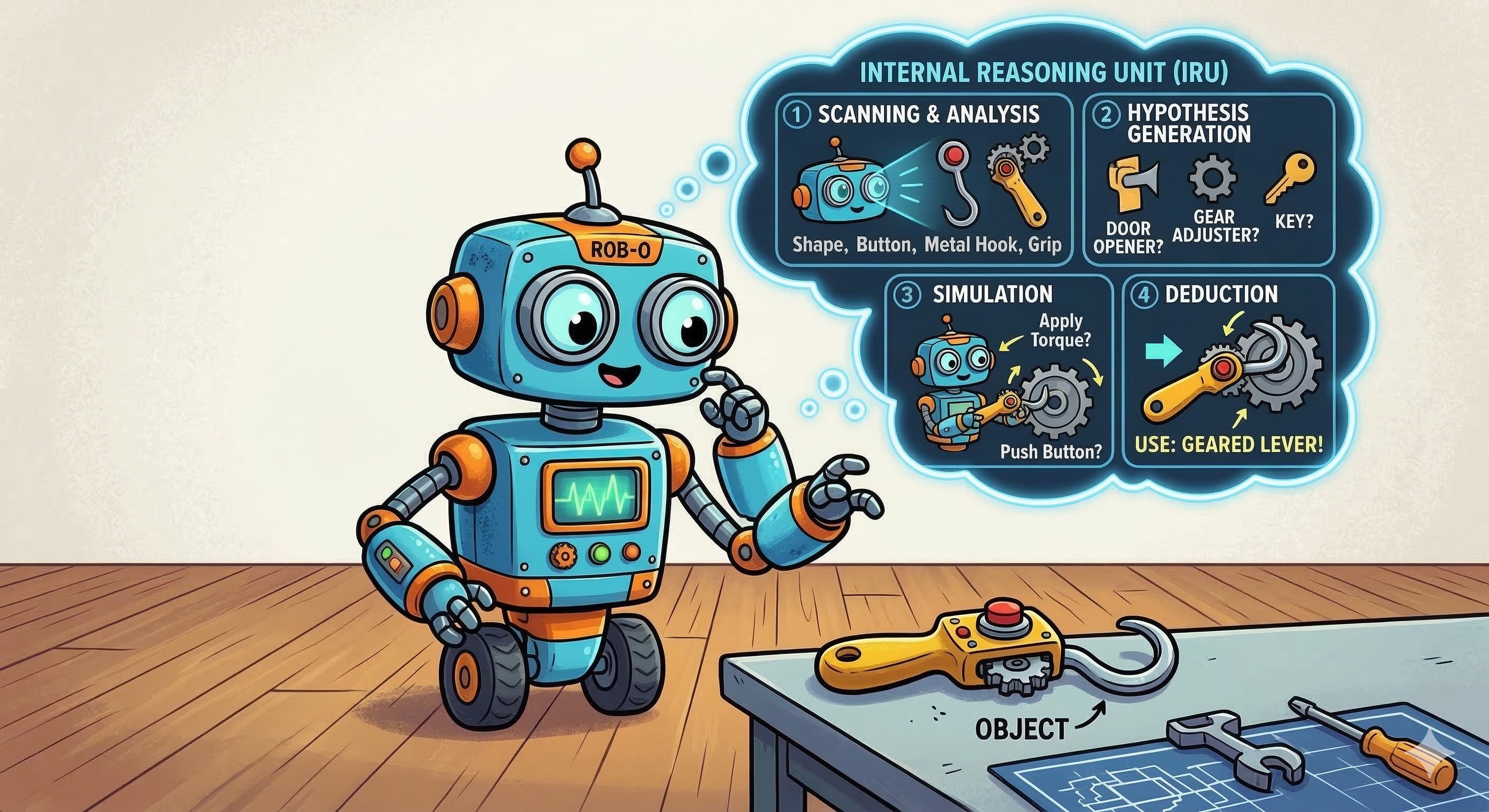

This biological analogy underpins our concept of Affordance Imagination: giving robots the ability to reason about function through simulation and exploration, the same way evolution equipped primates and humans with the ability to learn tools by trying them out.

Introduction

This website presents an affordance dictionary of common household objects and research outcomes of our group to demonstrate progress toward our funded project goal: using physical simulation to detect and reason about the affordances of objects.

Our central concept is affordance imagination — enabling robots to mentally simulate possible interactions with previously unseen objects. By integrating physics-based reasoning, geometric analysis, and learning methods (from demonstrations and large language models), our robots can classify novel objects, predict functional poses, and execute manipulation strategies without relying on massive amounts of training data.

The works presented here illustrate how affordance imagination bridges the gap between theory and practice: from seating a teddy bear on a previously unseen chair, to predicting hanging poses of tools, to capping containers, to leveraging LLMs for task decomposition. Together, these efforts chart a path toward safe, generalizable, and intelligent robot interaction in household and healthcare environments.

Object Affordance Dictionary

This section is a compact catalog of common household objects, intended to support affordance reasoning, simulation, and demonstration. Each entry records the object category, a link to the 3D asset, the primary affordance, an LLM-generated reasoning analysis, the dominant interaction pattern, and the corresponding imagination video in simulation.

3D models are stored under assets/models/ in .obj and .glb format, affordance reasoning profiles under assets/profiles/, and demonstration videos under assets/videos/. Click a model path to open the 3D viewer, analysis.txt to read the corresponding reasoning, or the mp4 video to watch the imagination process in PyBullet simulation. To generalize affordance reasoning to novel objects, we develop a framework that integrates LLMs for object imagination; our demo code is available in ImaginationWithLLM.

| ID | Object Name | 3D Model | Primary Affordance | Affordance Reasoning | Interaction Pattern | Video |
|---|---|---|---|---|---|---|
| 01 | Ashtray | assets/models/ashtray.glb | Collect ash and small discarded items | analysis.txt | grasp-rim, place-on-surface, drop-into | assets/videos/ashtray.mp4 |
| 02 | Basin | assets/models/basin.glb | Contain water or loose objects | analysis.txt | grasp-rim, carry, fill/pour | assets/videos/basin.mp4 |
| 03 | Basket | assets/models/basket.obj | Store and transport household items | analysis.txt | grasp-handle, carry, load/unload | assets/videos/basket.mp4 |
| 04 | Bathtub | assets/models/bathtub.glb | Contain a body for washing or soaking | analysis.txt | approach, step-in, support-body | assets/videos/bathtub.mp4 |
| 05 | Bed | assets/models/bed.glb | Support lying, resting, and sleeping | analysis.txt | approach, sit/lie, reposition-bedding | assets/videos/bed.mp4 |
| 06 | Bottle | assets/models/bottle.glb | Store and pour liquid | analysis.txt | wrap-grasp, tilt-pour, set-down | assets/videos/bottle.mp4 |
| 07 | Bowl | assets/models/bowl.glb | Contain food or small items | analysis.txt | grasp-rim, carry, place | assets/videos/bowl.mp4 |
| 08 | Box | assets/models/box.glb | Enclose, store, and organize contents | analysis.txt | grasp-side, open/close, pack | assets/videos/box.mp4 |
| 09 | Chair | assets/models/chair.glb | Support sitting posture | analysis.txt | grasp-backrest, pull/push, sit-support | assets/videos/chair.mp4 |
| 10 | Cubby | assets/models/cubby.glb | Compartmentalize and store objects | analysis.txt | insert, retrieve, organize | assets/videos/cubby.mp4 |
| 11 | Cup | assets/models/cup.glb | Contain and transport a drink | analysis.txt | grasp-side, lift, drink/pour | assets/videos/cup.mp4 |
| 12 | Cupboard | assets/models/cupboard.glb | Store protected household items | analysis.txt | open-door, shelve, close-door | assets/videos/cupboard.mp4 |
| 13 | Display Stand | assets/models/display.glb | Present and support an object visibly | analysis.txt | place-object, stabilize, reposition | assets/videos/display.mp4 |
| 14 | Ladle | assets/models/ladle.glb | Scoop and transfer liquid | analysis.txt | grasp-handle, dip, pour-out | assets/videos/ladle.mp4 |
| 15 | Plate | assets/models/plate.glb | Support and present food | analysis.txt | pinch-edge, carry-flat, set-down | assets/videos/plate.mp4 |
| 16 | Pot | assets/models/pot.glb | Contain and heat ingredients | analysis.txt | grasp-handle, lift, pour | assets/videos/pot.mp4 |
| 17 | Riser | assets/models/riser.glb | Raise an object to a higher level | analysis.txt | place-under, elevate, stabilize | assets/videos/riser.mp4 |
| 18 | Shelf | assets/models/shelf.glb | Support stored objects vertically | analysis.txt | place-object, stack, retrieve | assets/videos/shelf.mp4 |
| 19 | Shoe Rack | assets/models/shoe_rack.glb | Organize and store footwear | analysis.txt | place-pair, align, retrieve | assets/videos/shoe_rack.mp4 |
| 20 | Stool | assets/models/stool.glb | Support sitting or standing reach | analysis.txt | top-grasp, reposition, step/sit | assets/videos/stool.mp4 |
| 21 | Table | assets/models/table.glb | Support placement and workspace use | analysis.txt | place-object, lean-support, push | assets/videos/table.mp4 |
| 22 | Trash Bin | assets/models/trashbin.glb | Receive and contain waste | analysis.txt | drop-into, grasp-rim, relocate | assets/videos/trashbin.mp4 |
| 23 | TV Stand | assets/models/tv_stand.obj | Support media devices at viewing height | analysis.txt | place-object, organize-cables, reposition | assets/videos/tv_stand.mp4 |
| 24 | Vase | assets/models/vase.obj | Hold flowers or decorative stems | analysis.txt | grasp-body, insert-stems, place-display | assets/videos/vase.mp4 |
| 25 | Wine Glass | assets/models/wine_glass.obj | Contain and present a beverage delicately | analysis.txt | pinch-stem, lift, sip/place | assets/videos/wine_glass.mp4 |
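
For readers who want to work with the dictionary programmatically, the sketch below shows one possible way to model a row as a record and to group objects by shared interaction primitives. It is illustrative only and is not part of the project's released code: the class name `AffordanceEntry`, its field names, and the helper `index_by_primitive` are assumptions, and each interaction pattern is assumed to be a sequence of three primitives. The four sample rows are copied from the table.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AffordanceEntry:
    """One dictionary row. Field names are hypothetical, chosen to mirror the table columns."""
    entry_id: str
    name: str
    model_path: str
    primary_affordance: str
    interaction_pattern: tuple[str, ...]  # assumed: an ordered sequence of primitives
    video_path: str


# A small subset of the table, entered by hand for illustration.
CATALOG = [
    AffordanceEntry("01", "Ashtray", "assets/models/ashtray.glb",
                    "Collect ash and small discarded items",
                    ("grasp-rim", "place-on-surface", "drop-into"),
                    "assets/videos/ashtray.mp4"),
    AffordanceEntry("07", "Bowl", "assets/models/bowl.glb",
                    "Contain food or small items",
                    ("grasp-rim", "carry", "place"),
                    "assets/videos/bowl.mp4"),
    AffordanceEntry("22", "Trash Bin", "assets/models/trashbin.glb",
                    "Receive and contain waste",
                    ("drop-into", "grasp-rim", "relocate"),
                    "assets/videos/trashbin.mp4"),
    AffordanceEntry("25", "Wine Glass", "assets/models/wine_glass.obj",
                    "Contain and present a beverage delicately",
                    ("pinch-stem", "lift", "sip/place"),
                    "assets/videos/wine_glass.mp4"),
]


def index_by_primitive(entries):
    """Map each interaction primitive to the names of the objects that list it."""
    index = defaultdict(list)
    for entry in entries:
        for primitive in entry.interaction_pattern:
            index[primitive].append(entry.name)
    return dict(index)


if __name__ == "__main__":
    index = index_by_primitive(CATALOG)
    # Objects in this subset that can be grasped by the rim.
    print(index["grasp-rim"])
```

Grouping by primitive makes it easy to ask which catalogued objects share a manipulation strategy, for example every object that lists `grasp-rim`.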

Related Works

Put a Lid on It! A Learning-Free Method to Cap a Container via Physical Simulations (UR 2025)

Develops a simulation-based method for matching unseen containers and lids. Uses Gaussian process distance fields and matching imagination to achieve a success rate above 91%. Code.

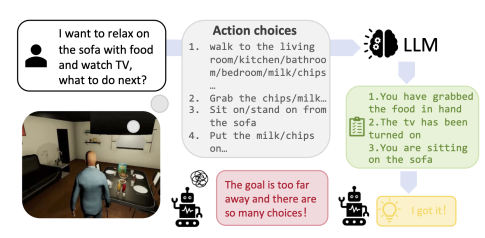

Goal-Guided Reinforcement Learning: Leveraging Large Language Models for Long-Horizon Task Decomposition (ICRA 2025)

Proposes G2RL, where LLMs generate subgoals to help reinforcement learning explore efficiently in long-horizon tasks. Validated across simulated environments with improved convergence. Code.

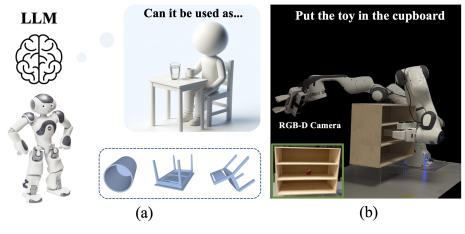

RAIL: Robot Affordance Imagination with Large Language Models (ISER 2025)

Combines LLMs with physics-based simulation for automatic affordance reasoning. From minimal semantic input, robots classify novel objects, predict functional poses, and perform unseen tasks. Code.

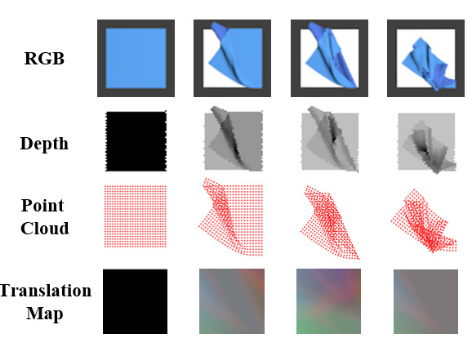

RaggeDi: Diffusion-based State Estimation of Disordered Rags, Sheets, Towels and Blankets (arXiv 2024)

Applies diffusion models for cloth state estimation. Represents cloth deformation as a translation map and achieves superior accuracy in both simulation and real-world experiments. Code.

PRIMP: PRobabilistically-Informed Motion Primitives for Efficient Affordance Learning from Demonstration (T-RO 2024)

Introduces PRIMP, which learns probabilistic motion primitives from demonstrations and integrates them with workspace-aware motion planning (Workspace-STOMP). Demonstrated on tool-use and affordance-based tasks. Code.

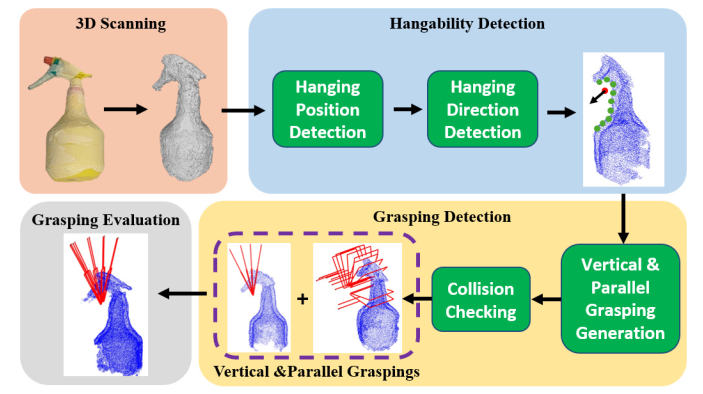

Grasping by Hanging: A Learning-Free Grasping Detection Method for Previously Unseen Objects (arXiv 2024)

Introduces Grasping-by-Hanging (GbH), a learning-free approach where robots detect hangable structures to derive grasping poses. Particularly effective for thin or flat objects. Code.

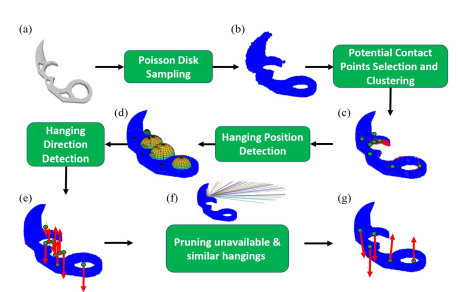

I Get the Hang of It! A Learning-Free Method to Predict Hanging Poses for Previously Unseen Objects (RA-L 2024)

Proposes a learning-free framework for predicting stable hanging poses using geometric and mechanical analysis. Outperforms learning-based baselines in simulation and real-world tests. Code.

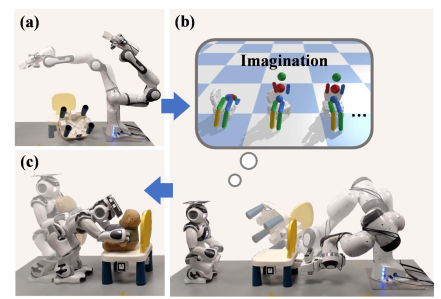

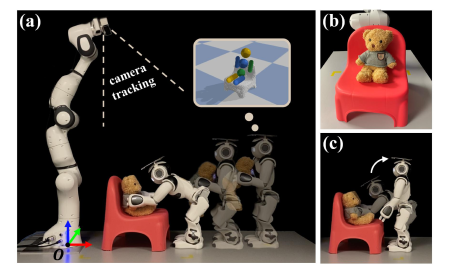

Prepare the Chair for the Bear! Robot Imagination of Sitting Affordance to Reorient Previously Unseen Chairs (RA-L 2023)

Robots reconstruct previously unseen chairs, simulate their sitting affordance, and reorient them so a humanoid agent (teddy bear proxy) can be seated. Demonstrates object classification and functional pose prediction via physics-based imagination. Code.

Put the Bear on the Chair! Intelligent Robot Interaction with Previously Unseen Chairs via Robot Imagination (arXiv 2022)

Extends chair affordance reasoning by enabling a humanoid robot to physically seat a teddy bear. Introduces a human-assistance module to adjust inaccessible chair poses via natural language instructions. Code.